From Command Allowlists to Governance: What Agent Security Is Missing

Some of the most responsible agent users today are doing something that should feel familiar to any security-minded engineer: they’re building real controls, by hand, around a system that otherwise operates with implicit authority.

Not better prompts. Not vibes-based trust. Actual controls.

A recent hardening thread by Jordan Lyall on X is a great example of this approach in practice. It’s worth reading — and worth understanding why it works, and where it breaks down.

Safety currently depends on discipline

The thread is impressive because it treats an agent like what it actually is: software with real access to systems, credentials, and meaningful blast radius.

The approach isn’t “trust the agent.” It’s “assume it will eventually do something you didn’t intend, and put boundaries in place before that happens.”

If you step back, nearly every mitigation in that setup exists to answer a single question:

How do I stop my agent from doing something I didn’t explicitly approve?

That’s the right question. The problem is that answering it today requires constant human effort.

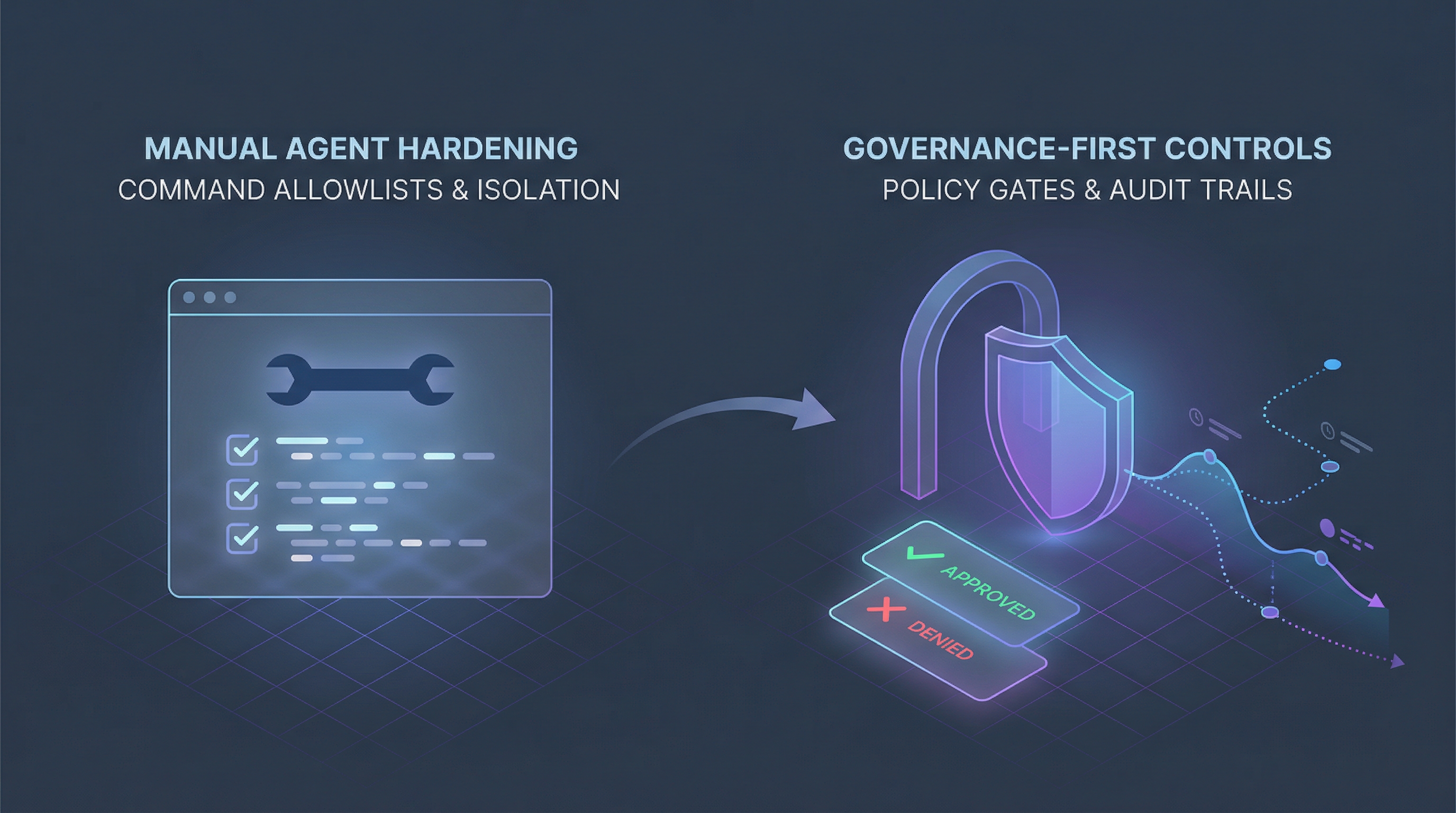

What people are building manually

In practice, manual agent hardening typically includes a stack like this:

- Command allowlists to reduce arbitrary shell execution.

- Read-only tokens to limit damage from prompt injection or tool misuse.

- One-way data flow to avoid hidden side effects and keep outputs reviewable.

- Skill skepticism (especially around marketplace content) to reduce supply-chain exposure.

- Manual audits and logs to create some level of visibility.

- Human discipline as the final approval gate.

This works. It should be respected. But it’s also fragile, human-dependent, and hard to scale across teams or organizations.
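To make the first item concrete, here is a minimal sketch of a hand-rolled command allowlist. It is not taken from the thread; the `ALLOWED` table and the `is_allowed` helper are hypothetical names chosen for illustration.

```python
import shlex

# Hypothetical allowlist: executable -> permitted subcommands (None means any arguments).
ALLOWED = {
    "git": {"status", "diff", "log"},
    "ls": None,
}

# Characters that enable chaining, redirection, or substitution in a shell.
SHELL_METACHARACTERS = ";|&$`><\n"


def is_allowed(command: str) -> bool:
    """Return True only if the executable (and subcommand, if restricted) is allowlisted."""
    # Reject shell metacharacters outright so "ls; rm -rf /" cannot slip through.
    if any(ch in command for ch in SHELL_METACHARACTERS):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    if not argv or argv[0] not in ALLOWED:
        return False
    subcommands = ALLOWED[argv[0]]
    if subcommands is None:
        return True
    # Restricted executables must name an allowlisted subcommand as their first argument.
    return len(argv) > 1 and argv[1] in subcommands
```

Even this small version shows the maintenance cost: every new tool needs a new entry, the rules live in one person's script, and the check knows what string is being run but nothing about why.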

The missing layer: pre-execution governance

The hardening stack above is compensating for a design gap:

Agents and their skills commonly operate with implicit authority — they can do whatever they can reach.

If an agent can execute actions, governance must exist before execution — not after. The fact that a log captures what happened doesn’t help if the damage is already done.

This is what governance-first architecture changes. Execution stops being “whatever the agent can reach” and becomes “only what was explicitly requested, evaluated, and allowed.”

What Clasper Core enforces by default

Clasper Core is designed to formalize the same instincts behind manual hardening, but at the control-plane level — so that teams don’t have to reinvent these patterns for every agent, every project, or every new team member.

Implicit shell access becomes explicit execution requests

Instead of hoping the agent doesn’t run something dangerous, the model changes:

- A capability (e.g., `shell.exec`) must be declared up front.

- Intent and context must be explicit in the request.

- Policy is evaluated before any side effects occur.

- High-risk actions can require human approval before proceeding.

This shifts the control surface from “inspect the exact command string” to “govern the capability, intent, and context.” It’s a fundamentally different posture.
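The flow described above can be sketched in a few lines of Python. This is an illustration of the pattern under stated assumptions, not Clasper Core's actual API: `ExecutionRequest`, `DECLARED_CAPABILITIES`, `evaluate`, `governed_execute`, and the `high_risk` context key are all hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical: capabilities the agent declared up front.
DECLARED_CAPABILITIES = {"shell.exec"}


@dataclass
class ExecutionRequest:
    capability: str
    intent: str
    context: dict = field(default_factory=dict)


def evaluate(request: ExecutionRequest) -> str:
    """Return 'allow', 'deny', or 'require_approval' without touching any system."""
    if request.capability not in DECLARED_CAPABILITIES:
        return "deny"  # undeclared capability
    if not request.intent:
        return "deny"  # intent must be explicit
    if request.context.get("high_risk"):
        return "require_approval"
    return "allow"


def governed_execute(
    request: ExecutionRequest,
    action: Callable[[], str],
    approved: bool = False,
) -> str:
    """Run `action` only after policy evaluation has passed."""
    decision = evaluate(request)
    if decision == "deny":
        raise PermissionError(f"{request.capability} denied by policy")
    if decision == "require_approval" and not approved:
        raise PermissionError(f"{request.capability} requires human approval")
    # Side effects happen here, after the decision, and nowhere earlier.
    return action()
```

The point of the structure is ordering: `action` is never called on a denied or unapproved request, so there is nothing to clean up afterwards.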

Command allowlists become capability + intent governance

Allowlists are useful, but they tend to be brittle:

- They grow over time as new commands are needed.

- They’re hard to maintain consistently across teams.

- They don’t model intent — why is this command being run?

Governance-first control evaluates intent and context up front:

```json
{
  "requested_capabilities": ["shell.exec"],
  "intent": "install_dependency",
  "context": {
    "external_network": true,
    "writes_files": true
  }
}
```

That enables policies like:

- “`shell.exec` + `external_network=true` requires approval”

- “marketplace provenance + `shell.exec` is denied”

No command parsing. No regex. Just structured governance over what the agent is actually trying to do.
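As a rough sketch, the two example policies can be written as plain checks over the request fields. The `decide` function, the top-level `provenance` key, and the fall-through to `"allow"` are assumptions made for illustration; the source does not specify how provenance is carried or what the default decision is.

```python
import json


def decide(request: dict) -> str:
    """Evaluate the two example policies against a structured request."""
    capabilities = set(request.get("requested_capabilities", []))
    context = request.get("context", {})

    # Deny rules are checked first so that an approval cannot override them.
    # Assumption: provenance arrives as a top-level "provenance" field.
    if "shell.exec" in capabilities and request.get("provenance") == "marketplace":
        return "deny"
    if "shell.exec" in capabilities and context.get("external_network") is True:
        return "require_approval"
    # Assumption: fall through to allow, purely to keep the sketch short.
    return "allow"


request = json.loads("""
{
  "requested_capabilities": ["shell.exec"],
  "intent": "install_dependency",
  "context": {"external_network": true, "writes_files": true}
}
""")

decision = decide(request)  # "require_approval" for the request above
```

Nothing in `decide` inspects a command string. The decision depends only on the declared capability, the context flags, and where the skill came from.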

“The agent promised” becomes enforceable policy

Prompt discipline, instruction files, and behavioral constraints all matter — but they’re guidance. The agent can still do the thing it promised it wouldn’t.

Governance is authority. It closes the gap between what the agent should do and what it can do.

Fear-based expansion becomes approval-based expansion

Manual hardening often leads to a cautious pattern: don’t add capabilities until you feel safe, keep the agent constrained indefinitely, and treat expansion as a risk you avoid rather than a decision you make deliberately.

Governance-first systems support controlled expansion:

- Explicit approvals for high-risk actions.

- Time-bounded authorization that expires automatically.

- Audit trails for every decision, so expansion is traceable, not just hoped-for.
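A minimal sketch of how time-bounded authorization and an audit trail might fit together is below. `Grant`, `GrantStore`, and the record fields are hypothetical; the `now` parameter exists only so the expiry logic can be exercised without waiting on a real clock.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class Grant:
    capability: str
    approved_by: str
    expires_at: float  # seconds, on the same clock as `now`


class GrantStore:
    """Holds approvals that expire on their own and records every decision."""

    def __init__(self) -> None:
        self._grants: dict[str, Grant] = {}
        self.audit_log: list[dict] = []

    def approve(self, capability: str, approved_by: str,
                ttl_seconds: float, now: Optional[float] = None) -> Grant:
        now = time.time() if now is None else now
        grant = Grant(capability, approved_by, now + ttl_seconds)
        self._grants[capability] = grant
        self.audit_log.append({"event": "approved", "capability": capability,
                               "by": approved_by, "at": now,
                               "expires_at": grant.expires_at})
        return grant

    def is_authorized(self, capability: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        grant = self._grants.get(capability)
        # No grant, or a grant past its expiry, both mean "not authorized".
        allowed = grant is not None and now < grant.expires_at
        self.audit_log.append({"event": "checked", "capability": capability,
                               "allowed": allowed, "at": now})
        return allowed
```

For example, a grant approved at `now=1000.0` with `ttl_seconds=60` authorizes a check at `1030.0` and refuses one at `1060.0`, and both checks land in `audit_log` alongside the approval. Nobody has to remember to revoke anything.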

What Clasper Core does not replace

Good engineering remains good engineering. Clasper Core does not replace:

- Machine isolation

- Network segmentation

- OS hardening

- Token scoping and key hygiene

- Rate limits and budget controls

Clasper Core is not a sandbox. It’s a governance layer. In a serious production setup, these layers complement each other — defense in depth still applies.

Manual vs. governed: same goals, different failure modes

| Concern | Manual hardening | Governance-first (Clasper Core) |
|---|---|---|
| Shell access | Command allowlist | Policy gates on capability + intent |
| Prompt injection | Token scoping + discipline | Execution denied even if the agent is prompted |
| Marketplace risk | Avoidance + vigilance | Provenance-aware policy decisions |
| Approval gating | Human discipline | Async approvals with decision records |
| Visibility | Logs and ad-hoc audits | Tamper-evident audit chain with exports |
| Trust | Gut feel | Trust status backed by evidence |
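The table does not say how a tamper-evident audit chain is built. One common technique is a hash chain, in which each entry commits to the hash of the entry before it. The sketch below illustrates that general idea only and makes no claim about Clasper Core's implementation; `GENESIS`, `append`, and `verify` are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list, record: dict) -> None:
    """Add a decision record that commits to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev_hash,
                  "hash": _digest(prev_hash, record)})


def verify(chain: list) -> bool:
    """Recompute every hash; any edited, reordered, or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier record changes its hash, so `verify` fails from that point on. A chain like this only detects tampering if the latest hash is also stored somewhere the writer cannot rewrite, since anyone able to replace the whole chain can recompute it.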

The takeaway

Running an AI agent safely today requires deep systems knowledge and constant vigilance. That’s a testament to the people doing it well — but it’s not a sustainable model.

That burden isn’t a user problem. It’s an architecture problem.

If an agent can execute actions, then governance must happen before execution, and authority must be explicit — not assumed, not hoped for, and not dependent on someone remembering to check the logs.

Clasper Core exists to make that the default.